Playing inFamous, BioShock, Dragon Age, Star Wars: The Old Republic, or any other game with a built-in morality system has been a bit strange for me. These games build stories where you get to choose how your character’s life should play out. There are dozens of opportunities to piss off the wrong people because they have punchable faces or act like skidmarks on your underwear, the kind that still appear regardless of how clean you’ve been. There are also moments that make you want to change sides because your emotions get in the way, causing you to ally with a faction that has a sympathetic background or to turn on one that killed a character you’ve grown fond of.

But all of those opportunities are useless. Not because I’m detached from the struggles of the characters in the story, nor because the storytelling did a crappy job of getting me attached to those characters, so that actions against them wouldn’t cause some emotional reaction.

It’s because when the game started, I decided that for this playthrough my character would be the Paragon, always choosing the morally “right” thing to do.

By picking a side and sticking to it, my actions are predetermined regardless of how bad the situation gets for the characters in the game, no matter what kind of emotional response I would have to my favorite ship getting tortured, the chaos the villain was causing, or a betrayal by my best friend.

The emotional stress that any of this would cause me normally would be completely disintegrated because I knew that my actions were already predetermined. I would be the Predetermined Paragon for this run of the game.

But why does choosing this even matter? Does the canonical story assume that the player would be a Paragon of goodwill, ethics and morality pulling from an infinite pool of patience and persistence until they succeed? Perhaps.

A question as important: why does it cause such emotional stress in the first place?


The Two Towers of Emotion

There’s a conjecture in cognitive and behavioral psychology that our response to a situation changes depending on the context in which we make our decisions. The way we make decisions is “state-dependent,” meaning that our decision making is based on our current emotional state, which makes it hard to imagine the decision we would have made in a different emotional state. This leads to an empathy gap (https://en.wikipedia.org/wiki/Empathy_gap). Metaphorically, it’s the idea of not judging people until you’ve walked a mile in their shoes.

Think of a situation like this. You’ve just gotten through a bad breakup, so you start to take the dating scene slowly. You’re picky about the people that you want to get involved with because you want that next person to be better than the last. But the dating scene has gotten worse since you last dated. You’re not in school anymore, it’s harder to meet people interested in the same things, and it takes a lot of work just to meet new dating prospects. Now it’s been over a year, you haven’t had sex since, and you’re at the point of just grabbing anyone with a modicum of shared interest. Nah, you just hope that you find someone that’ll at least have a fling with you. It’s been a while since someone else has touched your special parts and you’re just looking for someone who can do that.

The desperate you is almost a different person from the calm you.

The more desperate the situation you’re in, the likelier you are to loosen your moral and ethical boundaries, or to make riskier decisions because you only have your immediate goals in mind.

The empathy gap, in question, is the fact that when we are at a normal state, we don’t know just how much we will bend our morals given the desperation. We think that we will bend them a little bit, but in actuality we can bend them into a right angle given enough need.

Don’t believe me? Throwing around wild anecdotes? Thankfully we’re on Bias in Gaming, so expect this to be sourced.

How likely are you to practice things like safe sex? How adventurous are you in bed? Nipple rubbing? Feet licking? Would you have sex in the car, if it were in a dark parking lot where your car was the only one there? What about if that car were in a bar parking lot still late night? What if we moved that car to the mall parking lot, midday in the parking structure? What if it was your dream-crush guy, girl, beast-thing asking you to do it?

Keep those answers in mind. What if you were fooling around with someone and they asked you all of those same things? No condom with you, would you still be ready to go? Maybe it’s time for you two to get kinkier than normal.

There was a study that did just that. Answer a few questions about sexual habits, proclivities and responsibilities. Now watch porn for a while. Not to completion, but enough to get you all hot and bothered and try answering those questions again.

You know what they found out? That across the board we are more open to riskier sexual behaviors when we’re in the mood for sex. On top of that, when we’re not turned on, we are terrible at predicting how we will behave when we are.

Ariely, D.; Loewenstein, G.F. (2006). "The Heat of the Moment: The Effect of Sexual Arousal on Sexual Decision Making". Journal of Behavioral Decision Making 19: 87–98. | http://onlinelibrary.wiley.com/doi/10.1002/bdm.501/abstract;jsessionid=6EB707B80AFFD233C315648436D36516.f04t03

More generally, we are bad at predicting our actions in the hot state while we’re in the cold state. Emotional reactions are hard to predict when we’re in a cognitive/rational state.

Games are bad at putting us in these situations, though they try to. They give us morality systems and ask us how our character should react, but then they give us feedback like “this action is a ‘good action'” or “only a bad guy would do this, are you sure?” Which completely undermines the idea of testing our morality in the first place.

This is where a game like SOMA (2015) tests our morals in an organic way. You’re trapped in an underwater base after the Earth’s surface has been destroyed, and the crew is being absorbed by something Borg-like in an effort to keep the human race alive. You find the remnants of people left on life-support, or consciousnesses recorded on hard drives, living in perpetual agony whenever they get booted back to life. In the midst of a dozen or so tragic stories you’re asked, “What would you do?” Would you kill them so they won’t suffer any longer? There’s no more hope for them, considering they can’t go with you to your destination, and they can’t exactly live the way that they are.

SOMA tests your morality, giving you impossible choices and letting your emotions take over, letting the hot-state make the choices for you. But there’s no real right or wrong answer to these tests. The questions asked in SOMA and the answers that you give sit in a morally and philosophically grey area: even if you make your choices while in the hot-state, you go back into the cold-state and wonder if you would’ve still made the same choice. You get to live with the consequences of those actions and have them clawing at your subconscious for a while.

Our ideas of how we react go well beyond emotional responses to events and branch out to how we view stress and identity.

By stress, I mean that we can’t gauge how well people perform under stress until we are actually put in those situations ourselves. Again, an empathy gap bias. We think that the more stress we put on someone the better they will perform, but anyone who has taken an SAT or come into a test with less than complete confidence knows that this isn’t always the case.

When we’re put in stressful situations, we don’t always have the concentration to focus on the things that we should be worried about. Your mind wanders to that girl you’ve been flirting with in the coffee shop, to how you really need to pass this test because your grade for the class depends on it, or to playing well these next two games for that university scholarship you need. Your head isn’t focused on what it should be. The rewards are just too high; they’ve become a distraction.

In fact, the higher the rewards and the more noticeably present, the more of a distraction it can be to your performance.

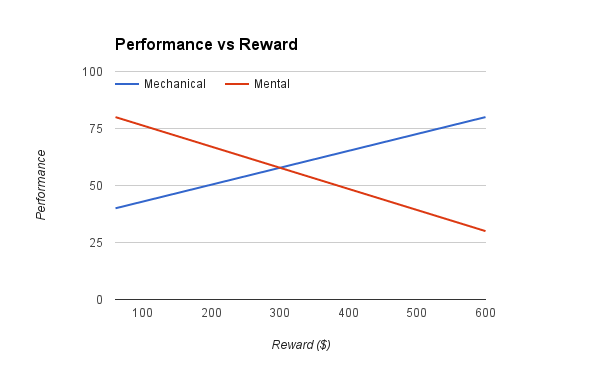

The above are results from one of a series of studies that Professor Dan Ariely performed to test this hypothesis. They tested this out by asking people to perform one of two tasks, repetitive keystrokes (a mechanical task) or adding a bunch of numbers (a mental task), with varying reward incentives. Some would be rewarded $60, some would be rewarded $600 depending on how well they performed at their task.

The gist is that people performed better at the mechanical task as the reward incentive increased, but the mental task showed the opposite correlation. The higher the reward incentive, the worse they performed at the mental task. The rewards became so distracting that people were more focused on the rewards than the task, leading to worse performance.

Ariely D (2008) What’s the Value of a Big Bonus? | http://danariely.com/2008/11/20/what%E2%80%99s-the-value-of-a-big-bonus/

The point is that being stuck in our heads, focused more on the outcome than the task at hand, is a bias that could be handled better in gaming. In the heat of the moment, like being in the hot-state, we focus on the wrong things. We focus more on the things that we immediately crave, instead of the thing that is better for us in the long run. Like walking by a fresh pizza cooking in a brick oven on day 2 of the diet you imposed on yourself after gaining a few too many pounds over the holidays. A pizza never smelled so delectable.

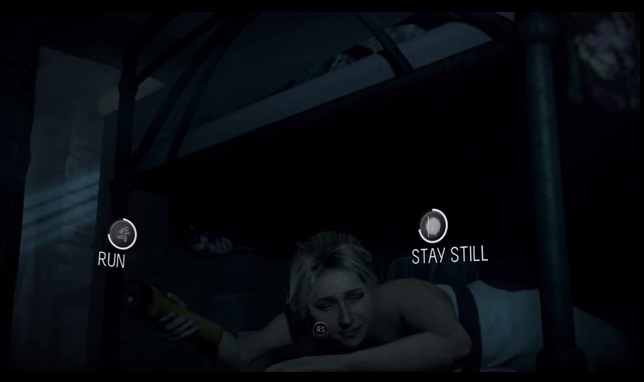

SOMA exercised our decision making about the corrupted lives around us without judgment, but a game like Until Dawn exercised our decision making while in complete distress. Your friends are starting to die all around you, you’re being chased by a monster and a killer, and you’re put in the situation to either hide in silence or try to make a run for it. Which would you choose?

The game opens up with impossible-to-know choices and, like SOMA, forces you to live with those consequences. Unlike SOMA, there is little grey area to your choices. You could’ve saved nearly every character in Until Dawn and it was your rushed decision making that caused their deaths. But how were you to know?

The game does a great job at keeping the stress high and making the moment-to-moment decision making time-limited so you’re not given the time to overthink your choices. You make the choice that feels right with the limited knowledge that you can remember at the time and hope for the best.

It helps that there’s no visual representation for the consequences of your actions, silently judging you by putting a label of right or wrong as you make those actions. Other than the potential immediate demise of the characters in Until Dawn, I mean. This is probably where games with choice screw up the experience the most. inFamous, Dragon Age, The Old Republic. They diminish the experience by prejudging your actions, giving you extra incentive to “do the right thing”. At least give me the option to play without judgment. Or pull a BioShock and judge me at the end of the game, a hidden surprise for skewing my judgments while playing.

Even without the immediate moral representation when making an action, as long as there’s someone there to judge your actions you’ll feel the repercussions of right or wrong decision making.

In general, if we can tie the suffering caused by our actions or inactions to a specific individual, then we have a harder time making choices that go against that individual.

“A single death is tragedy; a million is a statistic.” This is the Poster-Child Problem, also known as the Identifiable Victim Bias. If we can personalize a single individual, we become more empathetic to their suffering and are willing to help out more.

If you were given information about the millions of deaths caused by starvation in Africa, would you be willing to help? How much do you think you’d be willing to donate? Do you think that your money would be enough to help everyone out?

How about, instead, we give you information about a single child that is starving in Africa. How much do you think you’d be willing to donate? Does having information about this one kid give you a more personal connection to the suffering than before?

In a study reported in 2005, it was found that people were willing to donate twice as much when given a more personal relationship to the suffering than just given the raw statistics about the suffering.

What’s just as interesting is that if presented with both personal and statistical information, people would donate less than if given only the personal information. Something about statistics and analytics shuts down our emotional, empathetic abilities and makes us less emotionally invested in a situation.

Small D., Loewenstein G., Slovic P. (2005) "Sympathy and callousness: The impact of deliberative thought on donations to identifiable and statistical victims" | http://www.cmu.edu/dietrich/sds/docs/loewenstein/SympathyCallous.pdf

A game like Dragon Age balances the Identifiable Victim Bias well. Not all choices that you make in Dragon Age are about good or evil; many are about politics and strategy. You’re making decisions about showing force or choosing who should have priority for help, which are all analytic and strategic, but in the midst of this you’re balancing your relationships with your council and with members of the various tribes and cities nearby, each with a pre-existing history of war, deception, or treaties with one another. Then you come along, swinging your pecker around, trying to show that you can maintain your camp, but you also need to be in good standing with those that you work with.

The problem is that you can’t just do what you think is strategically right all of the time. Your council members are also liaisons to their villages and their people, and anything that you do to affect those people (good or bad) is reflected directly in your compatriots. They become the Victim by Proxy that personalizes your actions, coercing you to make decisions that are for or against a certain people because you want to stay in their good graces.

It doesn’t help that you keep trying to get into their pants. Oh, the decisions that we make because of our genitals. Genital Choices.

Having a Victim Proxy will always shift our choices because we have a person that we see immediately affected by our actions. The Little Sisters in BioShock. Your girlfriend Trish or best friend Zeke giving you a scolding in inFamous, voicing their unending disappointment in you. Always swaying the vote, even a little bit.

Self-Control and When to Betray It

Say you’re playing a good-guy only run of a game. Should there be repercussions because of picking a side so vehemently, other than repercussions to the story?

The problem with picking one side is that there should always be a reason to cross the fence. The grass should always seem greener on the other side. This is where true temptation lies and what makes a compelling story. The temptation of The Dark Side in Star Wars should always be about taking the easy way out, which makes staying the course on the Light Side seem more noble. After having countless tragedies happen, maybe controlling the powers of The Dark Side would make life easier to deal with. No more suffering of your loved ones. Some other Dark Side nonsense.

It’s the practicing of your Self-Control that keeps you on the side that you’re on, and the depletion of that Self-Control that makes it all the more tempting to join the other side.

There’ve been a few studies showing that willpower and self-control are a limited resource in our day, which gives weight to the kinds of decisions that we make throughout the day. The more decisions that we make about things that we’re tempted by or on the fence about, the more willing we are to cave against our “better judgment.”

"Is Willpower a Limited Resource?" | https://www.apa.org/helpcenter/willpower-limited-resource.pdf

Take a game like BioShock. A game with only a finite amount of ADAM to help unlock your arsenal of powers, where the only way to get more of it is to kill the Little Sisters. If you really wanted to make killing the Little Sisters easier to rationalize, you’d make the rewards for killing them all the more generous. The game should get drastically easier by killing the Little Sisters, instead of unlocking your powers a little bit earlier than the normal course.

Actually, inFamous has the same problem. You’re never tempted away from the path that you chose so dramatically that the game becomes drastically easier because you decided to corrupt your choices for the easy road. In fact, the game penalizes you for straying from whichever path you chose, making sure that your story goes one of two ways, good or evil, with no grey in-between for more realistic choices. Your emotions are never allowed to shift so that you choose to spare someone that the evil you would normally not spare, or scare the inhabitants to make sure that they stay away from you because there’s always a fight around where you are.

Your Self-Control is never broken down to the point where the grass is ever noticeably greener if you decided to be bad every now and then.

But to return to the reason why we started talking about this in the first place: inFamous is good at instilling that emotional stress during those morally pivotal choices. It’s also the reason why you pick a side early on.

You make the decision early on to play your Paragon run, always picking the morally “right” thing to do. You pick your side when the stakes are low and the game gives you increasingly greater temptations to switch sides. Saving your girlfriend instead of a handful of doctors? How about sparing the life of the person responsible for the death of your brother because they were being blackmailed?

No matter how we feel in the moment, no matter how much we want retribution, we can fall back on the choices that we made early on as our North Star, correcting our moral compass when our immediate emotions get in the way.
